The Agentic Flattening

Twitter caused a real problem for the world.

They “flattened” different contributors (experts, amateurs, trolls) into one feed with the same visual weight. You lost the contextual cues (credentials, domain, intent) that normally help you judge an information signal.

The world’s foremost expert on infectious disease, economics, or childhood education could tweet something, and right below it, with the same border, font, and language, would be a tweet with a plausible-sounding opposing position, delivered with similar confidence. The only problem is, it was put forth by someone’s non-grass-touching, conspiratorially minded Uncle Joe. How could the user tell who was right?

This phenomenon is not limited to social media. We’re seeing this same monkey-brain quirk in the AI vendor world.

Comparing AI Systems

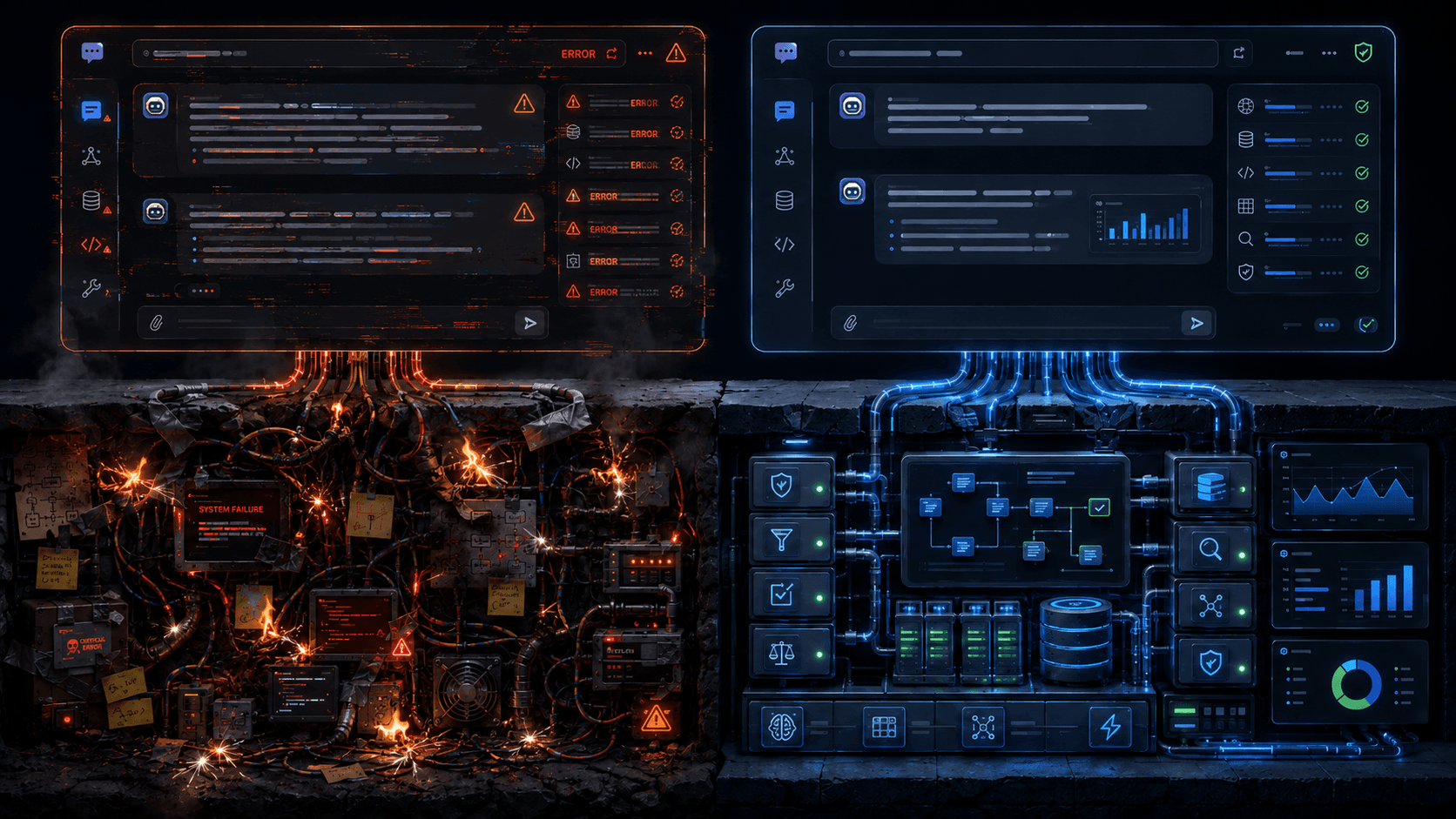

Agentic System A and Agentic System B both produce a wall of text, streamed with some visible thinking steps or tool calls interspersed, with similar visual effects. The results, visually, look nearly identical. Undiscerning buyers can’t tell the difference right off the bat.

What about the discerning buyers? Can they tell the difference between the contents?

There is a measurable difference between whatever Claude Code in your terminal spits out one day and a mature, purpose-built harness: one that is trained (skilled), backed by a vast knowledge base and deterministic tools, connected to context, equipped with memory, intelligent caching, and graceful error handling, and benchmarked to death every day.

I’ve heard lots of takes on LinkedIn/HN saying things like, “both products can call MCP servers so they are the same thing”. That, to me, sounds like “well both these products use APIs, so they must be indistinguishable.”

So, isn’t the solution to this problem obviously benchmarks? It could be -- the good news is that in our space, we’re not remotely close to benchmark saturation.

Public Benchmarks Are Mostly Marketing and Definitely Misleading

The ecosystem doesn’t reward making public benchmarks.

First, open benchmarks lose their value over time. Samples will work their way into the weights themselves in new model releases. Also, models, when they realize they’re under test, often make funny decisions that diminish the value of their results. Incidentally, we’ve seen a noticeable uptick of this “I know I’m being tested” behavior with gpt-5.4+ and Anthropic 4.6+. It is annoying since it means we have to make big changes to our benchmarks and harnesses.

The benchmark itself is a roadmap to make the industry-leading product. You only know how to get to the answers if you first develop these tests. If you then release the test, competitors can and will use it to efficiently drive their investment and catch up at a much faster pace than it took you to get there. So, you own the benchmark creation and maintenance – and your fast-following opponent just has to worry about overfitting to the test.

We’ve seen this pattern a few times. A company launches with exciting published benchmarks, markets them heavily, and then throws them away / takes them private / stops contributing once the core mission (marketing) is accomplished.

Private Benchmarks Are Not Verifiable

At Pixee, we have several different benchmarks to test different components of the system individually, as well as end-to-end benchmarks. These benchmarks require a complete software factory of their own. We build and maintain special tools to monitor their behavior, generate, visualize, and debug samples – and much more. We get annoyed when they need constant maintenance. But, paying this tax is the only way we know these things work, so it’s worth it.

We don’t publish them because we spend a lot of time on them, and I want my competitors to also have to spend that time, and to have to develop their own taste that will allow them to make the agents useful for the enterprise. No one else in the world has spent anywhere near as many man-years on this problem as we have. I can’t give that out, right?

But, where does that leave customers? I am very straightforward with them. I’ll tell you my benchmark scores, but that shouldn’t mean much to you. I can flood a benchmark with samples that are trivially easy to pass. I can make that score say whatever I want. I always tell people we have a mix of easy things, subtle things, and hard things. We also have “arenas” that test the depths of our inductive and deductive learning about customer-provided context. Many of them have secondary endpoints that are very specific to our tooling, so they wouldn’t make sense as public artifacts.
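To make the "flooding" point concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the `Sample` type, the difficulty labels, and the pass/fail numbers are made up for illustration and are not Pixee's benchmark data or tooling. It only shows the arithmetic of how padding a suite with trivially easy samples moves the headline score while the system's actual capability stays the same.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    # Hypothetical labels for illustration: "easy", "subtle", or "hard".
    difficulty: str
    passed: bool


def pass_rate(samples):
    """Headline score: fraction of all samples that passed."""
    return sum(s.passed for s in samples) / len(samples)


def pass_rate_by_difficulty(samples):
    """Same score, broken out per difficulty bucket."""
    buckets = {}
    for s in samples:
        buckets.setdefault(s.difficulty, []).append(s)
    return {name: pass_rate(group) for name, group in buckets.items()}


# Made-up results for a mixed suite: 10 easy, 10 subtle, 10 hard.
honest = (
    [Sample("easy", True)] * 10
    + [Sample("subtle", True)] * 6 + [Sample("subtle", False)] * 4
    + [Sample("hard", True)] * 3 + [Sample("hard", False)] * 7
)

# The same suite, flooded with 170 more trivially easy samples.
padded = honest + [Sample("easy", True)] * 170

print(f"honest headline: {pass_rate(honest):.1%}")
print(f"padded headline: {pass_rate(padded):.1%}")
print("honest breakdown:", pass_rate_by_difficulty(honest))
print("padded breakdown:", pass_rate_by_difficulty(padded))
```

With these invented numbers the headline goes from roughly 63% to roughly 95%, while the per-difficulty breakdown is identical in both suites. That is why a single score, mine included, shouldn't mean much to you without knowing the mix behind it.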

I suppose I could invite folks to the “factory floor” to show them benchmarks. I haven’t tried that yet!

So, Where Does That Leave Customers?

This means customers would have to probe the depth of every dimension in every proof-of-concept we do in order to understand the distance between us and the competition, including whatever DIY samples they have. We have to get a lot more prescriptive in order to show the differences, but customers generally want you to “give me the thing then let me play with it.”

Living Through the Flattening

The mechanism is the same one Twitter introduced: strip the contextual cues that let a person judge a signal, and the signal that wins is the one with the lowest barrier to entry, not the one that's right. On Twitter, that barrier was cognitive — the take that conflicts with your priors the least, the one you don't have to update anything to accept, is the one that we accept. In agentic software, the barrier is more organizational — the DIY effort being pushed from above, whatever tooling is already hanging around, whatever's easier politically to get through procurement. Different barriers, same adverse selection pressure.

I have total confidence the market will sort it out, and total confidence it will be inefficient. Many buyers along the way will be genuinely convinced their DIY effort or their chosen vendor is the right answer, when it isn't.