The Battle of AI Wrappers vs. AI Systems

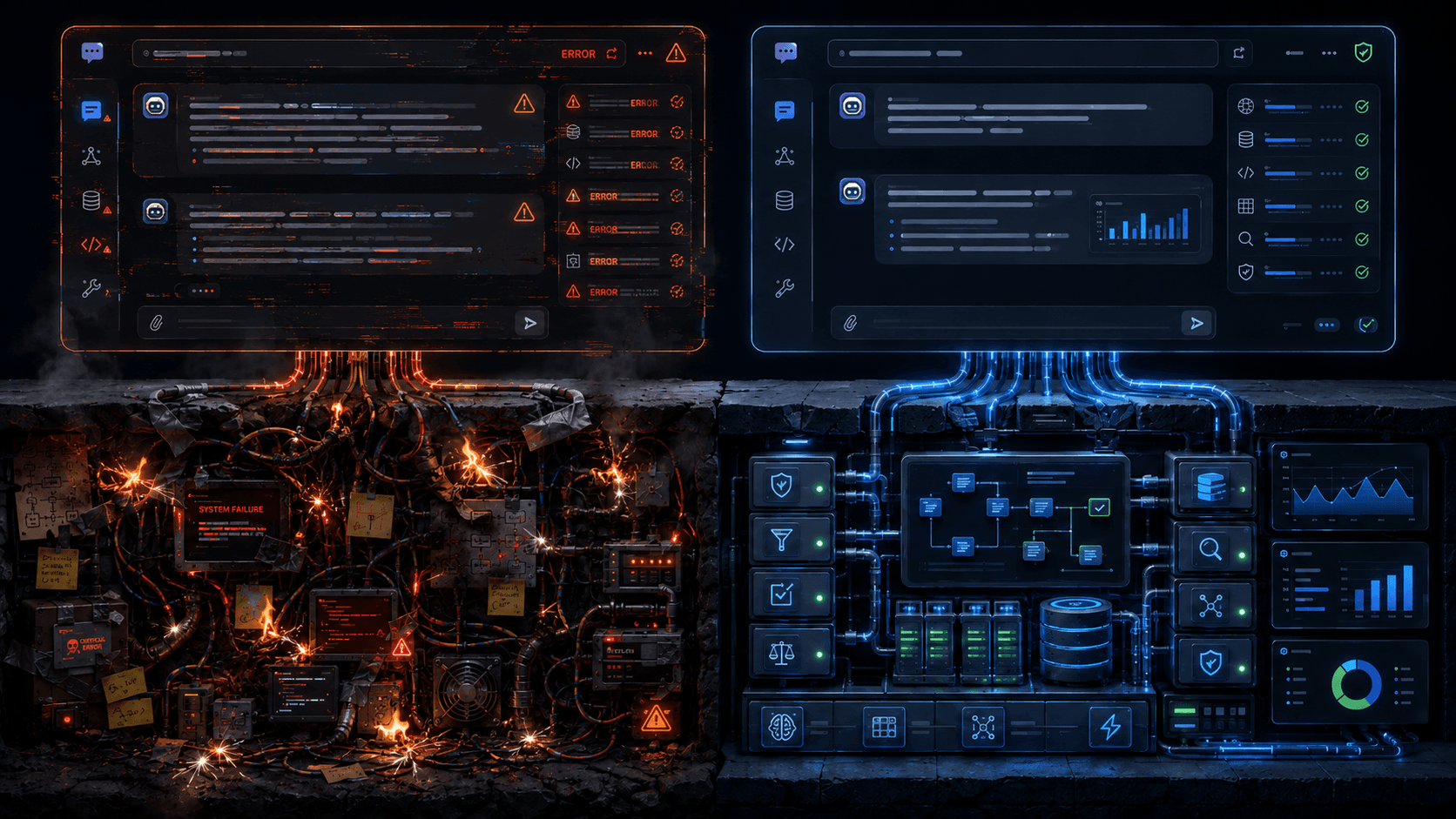

To do vulnerability triage, we use a number of tools: composable agents, workflows, zero-shot LLM calls, deep research, knowledge bases, code analysis tools — you get it.

But, does any of it matter? We need to know if a simple “AI wrapper” from some competitor is going to get “good enough” results.

We decided to put it to the test.

We took all of our fancy tools and put them on the sideline for a moment. We gave a SOTA reasoning model, running in a high-performing SWE agent, the same inputs and tools, along with what we think are good prompts for this task, and benchmarked the results.

The Benchmark

Our benchmark data is a human-labeled mix of real-world open source vulnerabilities, anonymized examples from consenting customers, hand-crafted cases, and synthetically generated cases. It has easy cases and hard cases, and some are intentionally not decidable with just the inputs given.

We benchmark relatively concrete things (e.g., “is it a false positive?”) and slightly fuzzier things (e.g., “is this vulnerability actually higher or lower severity than reported?”). In fairness to the wrapper, we made it easier to “pass” and gave it some grace on the fuzzier things we measure, even though we think classifying severity correctly, the way an enterprise does, is very important, as it drives real policy inside our customers.
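To make the idea of "grace" concrete, here is a minimal sketch of how a strict pass and a lenient pass could be scored. Everything in it is an assumption for illustration: the `Label` fields, the four-step severity scale, the `severity_tolerance` parameter, and the toy cases are hypothetical and are not our actual benchmark harness or data.

```python
from dataclasses import dataclass

# Hypothetical severity scale, ordered from least to most severe.
SEVERITY_ORDER = ["low", "medium", "high", "critical"]


@dataclass(frozen=True)
class Label:
    is_false_positive: bool
    severity: str  # one of SEVERITY_ORDER


def score_case(expected: Label, predicted: Label, severity_tolerance: int = 0) -> bool:
    """Return True if the prediction passes for a single benchmark case."""
    # The concrete question must match exactly.
    if expected.is_false_positive != predicted.is_false_positive:
        return False
    # Severity is moot once both sides agree the finding is a false positive.
    if expected.is_false_positive:
        return True
    # The fuzzier question: allow the severity to be off by up to N steps.
    distance = abs(
        SEVERITY_ORDER.index(expected.severity)
        - SEVERITY_ORDER.index(predicted.severity)
    )
    return distance <= severity_tolerance


def accuracy(pairs, severity_tolerance: int = 0) -> float:
    """Fraction of (expected, predicted) pairs that pass."""
    passed = sum(score_case(e, p, severity_tolerance) for e, p in pairs)
    return passed / len(pairs)


# Toy (expected, predicted) pairs, invented purely for this example.
toy_pairs = [
    (Label(False, "high"), Label(False, "high")),    # exact match
    (Label(False, "medium"), Label(False, "high")),  # severity off by one step
    (Label(True, "low"), Label(True, "high")),       # both say false positive
    (Label(True, "low"), Label(False, "critical")),  # wrong on the concrete question
]

strict = accuracy(toy_pairs, severity_tolerance=0)
lenient = accuracy(toy_pairs, severity_tolerance=1)
```

On these toy pairs, the strict score counts the off-by-one severity as a failure, while the lenient score lets it pass. That is the kind of slack the wrapper was given on the fuzzier measurements.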

So, enough already — how does it do?

Purpose-Built AI System Beats Vanilla SOTA Model By Some Distance

I’m very happy (for ourselves and the wider AI startup ecosystem) to report that moats still exist in the AI world. We performed significantly better than the “naive” SWE agent at classifying multiple aspects of a vulnerability. Note that our benchmark includes many vulnerabilities that are hard to adjudicate.

Our agent achieves an 89% classification accuracy on our benchmarks, while the naive agent gets around 51%.

On review of where the naive agent succeeds, we notice a few patterns. It gets more of the easy, single-file cases right (but not all of them), and trends towards failing on the trickier cases. It tends to elevate risk where there isn’t any. It succeeds when detailed knowledge of frameworks is less important to the right classification. We could dig into the data much more and try to answer questions like: does it tend to do well on the true positives but fail on the false positives? What rule classes does it have trouble classifying?

Why Do We Outperform?

The models alone are still just not great at AppSec. Deep, nerdy aspects of vulnerabilities and exploitation specifics in the space are just not “second nature” to the weights: what combination of flags disables external entity resolution in Java XML parsers (quite tricky), which jQuery APIs actually execute their inputs, etc. There is benefit to a great knowledge base, using workflows instead of agents where appropriate, enumerating hard conditions for classification matrices, using Exploit Verification, etc. If I’m forced to speculate on why, it’s probably because there’s way more chatter about “Why did the Allies win WW2?” in the training data than there is about these esoteric security issues.
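As an illustration of what "enumerating hard conditions" can look like for the Java XML parser case, here is a simplified sketch. The feature URIs are the real JAXP/Xerces feature names commonly recommended for hardening `DocumentBuilderFactory`. The `classify_xxe` function, its verdict strings, and the idea of passing in a pre-extracted feature map are assumptions made for this example, not a description of our production logic. A real rule would also need to account for XInclude, entity reference expansion, and the specific parser implementation.

```python
from typing import Dict, Optional

# Real feature URIs used when hardening Java XML parsers.
DISALLOW_DOCTYPE = "http://apache.org/xml/features/disallow-doctype-decl"
EXTERNAL_GENERAL = "http://xml.org/sax/features/external-general-entities"
EXTERNAL_PARAMETER = "http://xml.org/sax/features/external-parameter-entities"
LOAD_EXTERNAL_DTD = "http://apache.org/xml/features/nonvalidating/load-external-dtd"


def classify_xxe(features: Optional[Dict[str, bool]]) -> str:
    """Classify a parser configuration (hypothetical helper).

    `features` maps feature URI -> value passed to setFeature(), as
    extracted from the code under review. None means the configuration
    could not be determined from the available code.
    """
    if features is None:
        # Not enough data to decide: say so explicitly.
        return "inconclusive"

    # Condition 1: DOCTYPE declarations are rejected outright.
    if features.get(DISALLOW_DOCTYPE) is True:
        return "mitigated"

    # Condition 2: DOCTYPEs are allowed, so every external-entity
    # path must be explicitly switched off.
    if (
        features.get(EXTERNAL_GENERAL) is False
        and features.get(EXTERNAL_PARAMETER) is False
        and features.get(LOAD_EXTERNAL_DTD) is False
    ):
        return "mitigated"

    # Java's default DocumentBuilderFactory resolves external entities,
    # so a missing or partial configuration is not a mitigation.
    return "not_mitigated"


# A partial fix that is easy to mistake for a complete one.
partial = classify_xxe({EXTERNAL_GENERAL: False})
```

The partial configuration at the end is the tricky case: one flag is set, the code looks hardened at a glance, and parameter entities are still resolved. Writing the conditions down explicitly means the classification does not depend on the model remembering which combination is sufficient.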

The models’ safety alignments prevent taking firm positions. Some code vulnerabilities are inconclusive, because there isn’t enough data in the codebase to make a classification. So, you must offer a model this option. But, as I’m sure you’ve seen in your usage of ChatGPT, LLMs have a hard time resisting the tendency to sit on the fence, i.e., “it’s probably nothing, but consult a doctor!”

Well, in this case, we are the doctors, and there is no one else to call. We must reach a firm, defensible conclusion in as many situations as possible.

Great, But Solution ≠ Analytics

Of course, there’s a lot of other value in our platform besides raw triage accuracy, but being the best in the world at this is our table stakes. To be the World’s Best Fix Tool, you have to be the World’s Best Triage Tool.

And the best platform of the future isn’t only about what you do with the code. The future is building a Resolution Layer for your company that dynamically pulls in the right context from your systems, and plugs into your workflows the way you want. It’s got to have both inductive and deductive learning capabilities. It’s got to have a lot of things.

More to come on that in the next few weeks!